Casting light on lending decisions

A cross-disciplinary sprint to make AI-driven lending decisions legible to the people most affected by them.

During BrainStation's sprint, I joined a team of six to tackle a real-world challenge. The starting point: a broad "How Might We" around AI transparency and human oversight. This could have taken us anywhere. We chose to aim it directly at one of the most consequential and least transparent experiences in American financial life: the mortgage approval process.

We began with the sprint's original HMW prompt: "How might we help distributed teams turn AI-generated outputs into trustworthy, actionable decisions without losing human oversight?" It was broad by design. Our job was to find a human reality inside it.

When we applied a fintech lens, one question kept surfacing: who are the most vulnerable stakeholders in an AI-driven decision? Not the lenders, who have access to the model logic. Not the analysts, who can interrogate the data. The most vulnerable person is the one sitting on the other side of a rejection letter with no explanation attached.

In 2024, the mortgage loan rejection rate was nearly double what it was in 2019.

Yet most applicants never understand why. The algorithm that decided their future remains invisible to them.

To keep our design grounded, we developed a persona, Sam, to represent the person at the center of this problem.

Sam is nervous, not unintelligent. She doesn't need the system dumbed down; she needs it made visible. That distinction shaped everything we built.

Now that we knew our user better, we could refine our How Might We statement:

"How might we give nervous mortgage applicants the same visibility into AI decision-making that lenders already have, so the approval process becomes a conversation rather than a black box?"

All of it in language applicants actually use. Not financial jargon. Not a credit score with no context. Plain language, clear variables, and real insight into what the model is actually weighing.

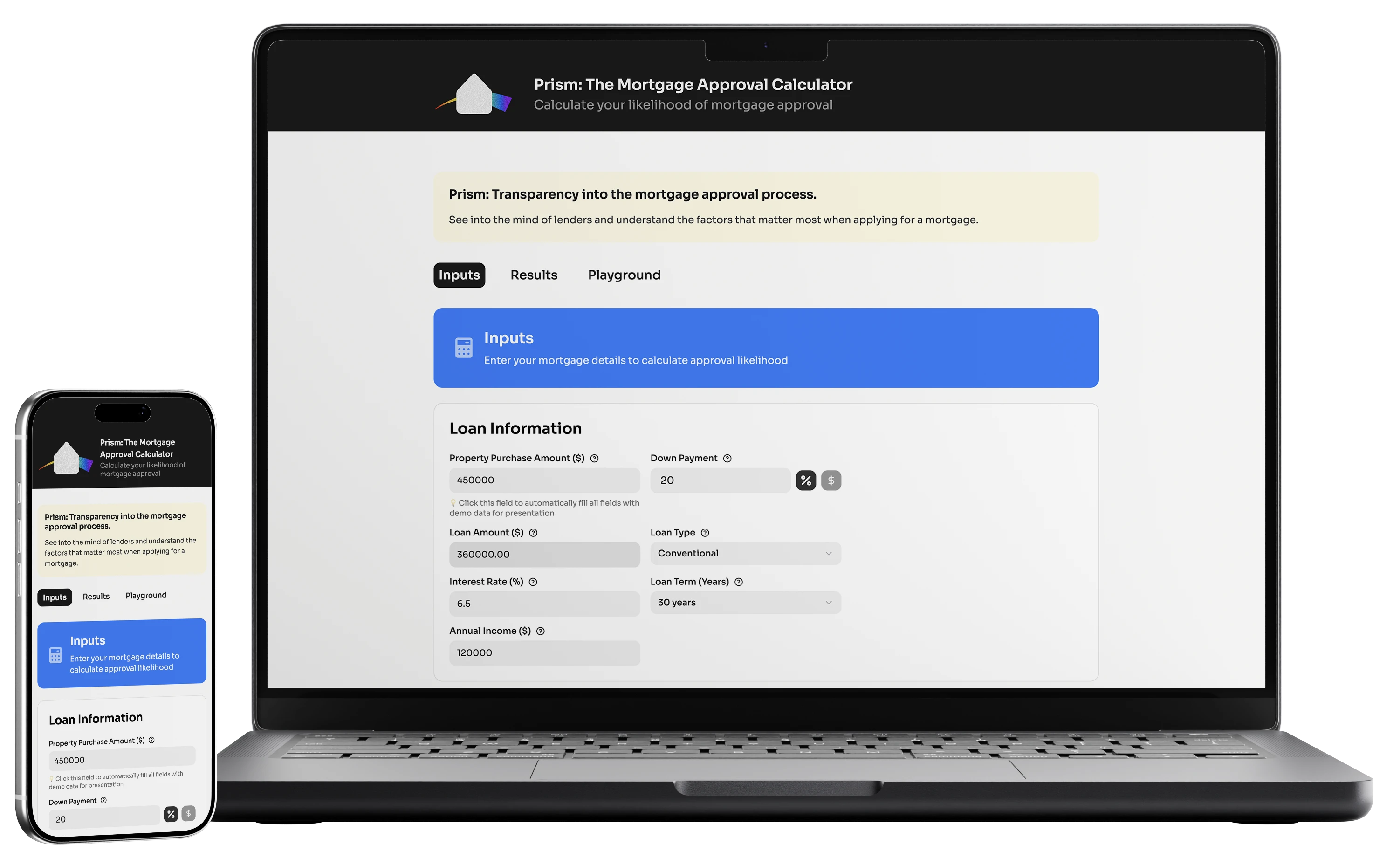

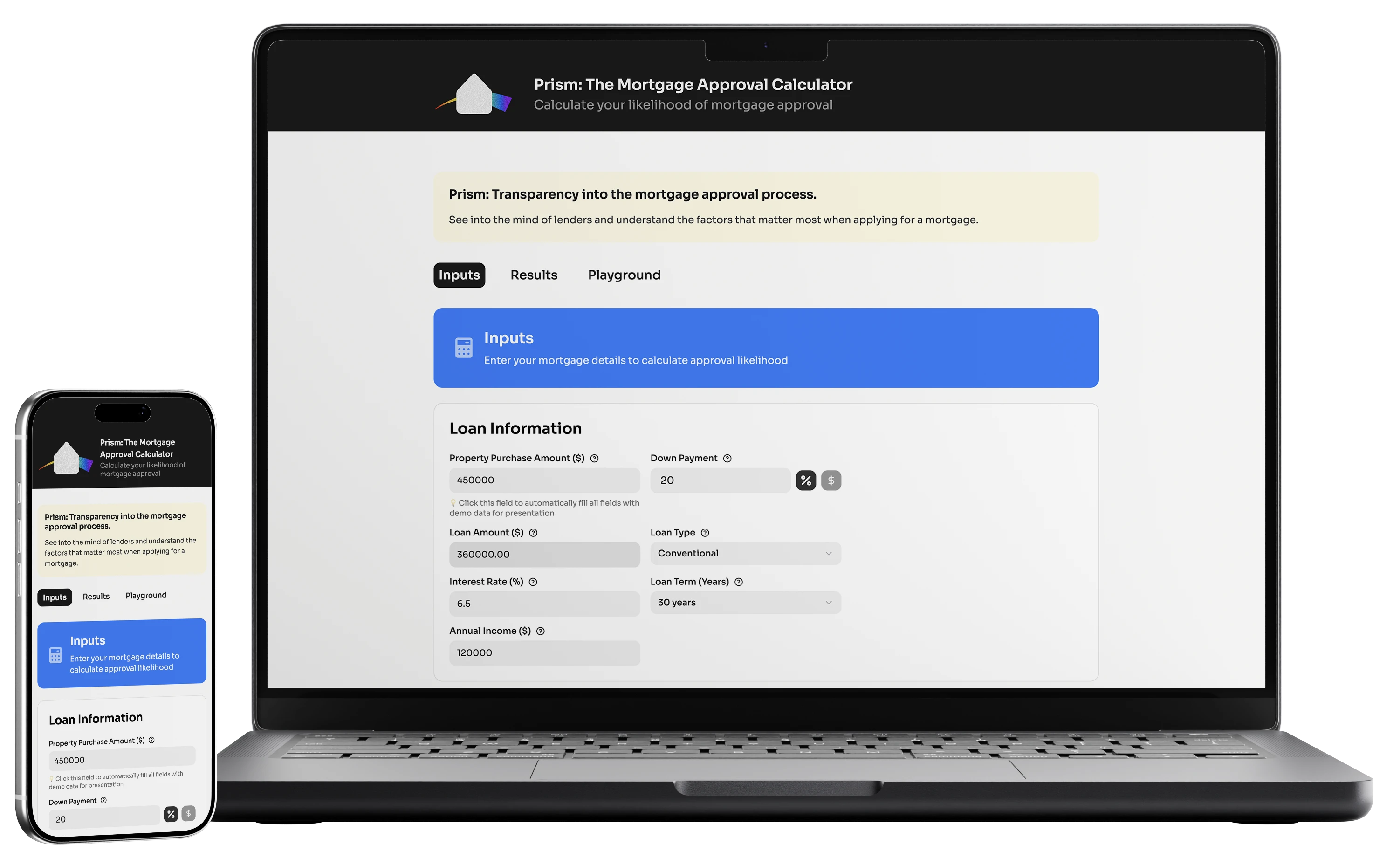

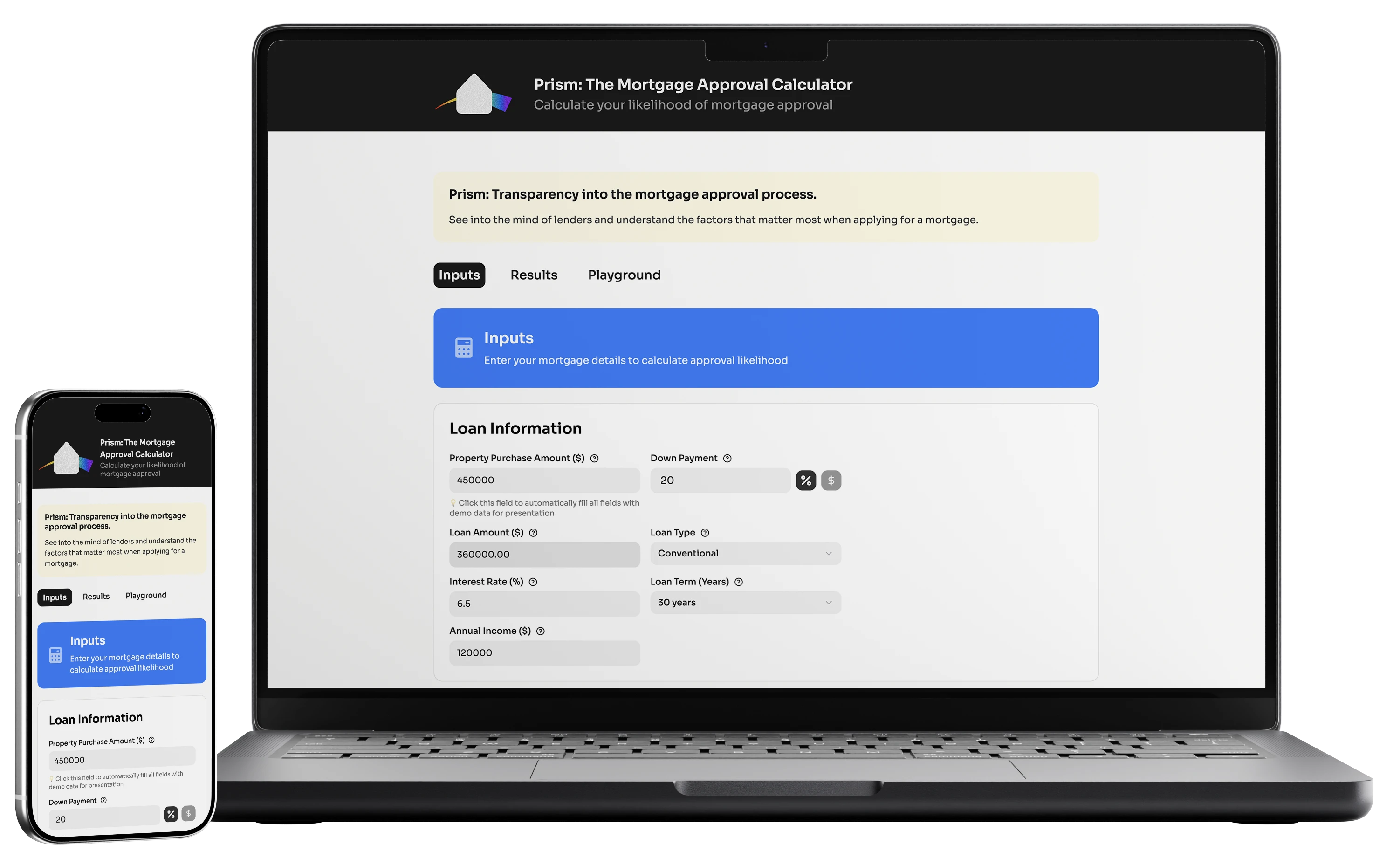

Our solution, Prism, is built on real HMDA data and a model trained on over 4.6 million applications. It lets applicants enter their financial picture, see their approval likelihood, and, crucially, explore how changing any single variable shifts their odds in real time. The approval process stops being a verdict and starts being a conversation.

Describing design is one thing. Feeling it is another.

Note: this is a prototype built in Figma Make. It does not include the trained model we presented at the end of the hackathon.

Our data scientists led the research into how mortgage AI systems actually work.

They drew on HMDA (Home Mortgage Disclosure Act) data and published literature on explainable AI in financial services. What emerged was a clear structural picture of why people like Sam are left in the dark.

Our research spanned peer-reviewed literature on explainable AI in financial services, FCA guidance on AI in credit decisions, and HMDA datasets covering millions of real applications. We used Perplexity and Claude to synthesize sources, but our data scientists and I verified every finding.

Mortgage AI engines are opaque gatekeepers

Consumers and their advisors are systematically excluded from lending systems until the moment they submit a high-stakes application. There's no preview, no simulation, no rehearsal — just a verdict.

A "data hostage" situation

Teams can't collaboratively interrogate AI logic or apply human oversight to catch nuances in the data before a decision is made. The model has already run. The window to intervene is closed.

Blind financial leaps that erode trust

Without the ability to simulate outcomes before applying, applicants must either take a shot in the dark or disengage from the process entirely. Both outcomes harm them — and harm lenders who want qualified borrowers in the pipeline.

The product is built on real HMDA data, uses a logistic regression model trained on over 4.6 million applications, and is designed to feel more like a conversation than a calculator.

feature 01

Your financial picture

Users enter the key variables that drive mortgage decisions: loan amount, down payment, income, debt-to-income ratio, property value, credit score, and loan type. Each field includes a plain-language tooltip explaining why it matters. Nothing is hidden behind jargon.

feature 02

Your approval likelihood

Based on the HMDA-trained model, Prism returns a clear probability score alongside a breakdown of which factors are working in the applicant's favor and which are working against them. The goal is not just a number — it's understanding.
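To make the idea of a "breakdown" concrete, here is a minimal sketch of how a logistic regression can produce both a probability and a per-factor explanation. It assumes inputs are standardized (0 means an average applicant), and every name and number in it (`WEIGHTS`, `INTERCEPT`, `approval_breakdown`, `split_factors`, the feature keys) is a hypothetical placeholder, not the coefficients or code of the model we trained.

```python
import math

# Hypothetical weights for illustration only; NOT the trained Prism model's coefficients.
# Inputs are assumed standardized (0 = dataset average), so weight * value is that
# factor's push on the log-odds relative to an average applicant.
WEIGHTS = {
    "credit_score_std": 0.9,       # higher credit score raises the odds
    "dti_ratio_std": -0.7,         # higher debt-to-income ratio lowers the odds
    "down_payment_pct_std": 0.4,   # larger down payment raises the odds
}
INTERCEPT = 1.2  # hypothetical log-odds for an average applicant


def approval_breakdown(applicant):
    """Return (approval probability, per-factor contributions to the log-odds)."""
    contributions = {name: w * applicant[name] for name, w in WEIGHTS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))  # logistic (sigmoid) function
    return probability, contributions


def split_factors(contributions):
    """Sort factors into those working for and against the applicant, strongest first."""
    in_favor = sorted((k for k, v in contributions.items() if v > 0),
                      key=lambda k: -contributions[k])
    against = sorted((k for k, v in contributions.items() if v < 0),
                     key=lambda k: contributions[k])
    return in_favor, against


# Hypothetical applicant: below-average credit, above-average DTI, average down payment.
probability, contributions = approval_breakdown(
    {"credit_score_std": -0.5, "dti_ratio_std": 0.8, "down_payment_pct_std": 0.0}
)
in_favor, against = split_factors(contributions)
```

Because a logistic regression is linear in the log-odds, each factor's contribution simply adds up, which is part of what makes this kind of model comparatively easy to explain in plain language.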

feature 03

The "What if?" sandbox

This is the heart of Prism. Users can adjust any variable — income, down payment, credit score, loan term — and see their approval likelihood update in real time. A "Scenario Impact Summary" shows exactly what changed. This transforms a scary one-time submission into an ongoing, exploratory conversation.
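A minimal sketch of the "What if?" idea, assuming the same kind of logistic model: score the applicant's current inputs, re-score a copy with one or more inputs changed, and report the difference. The weights, feature names, and the `scenario_impact` helper are hypothetical stand-ins for illustration, not Prism's actual implementation of the Scenario Impact Summary.

```python
import math

# Hypothetical weights on standardized inputs; placeholders, not the trained Prism model.
WEIGHTS = {"credit_score_std": 0.9, "dti_ratio_std": -0.7, "down_payment_pct_std": 0.4}
INTERCEPT = 1.2


def approval_probability(applicant):
    """Logistic regression score: sigmoid of the weighted sum of inputs."""
    log_odds = INTERCEPT + sum(w * applicant[name] for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-log_odds))


def scenario_impact(baseline, **changes):
    """Re-score the applicant with some inputs changed and report the difference.

    A hypothetical stand-in for a "Scenario Impact Summary"; the baseline dict is not modified.
    """
    unknown = set(changes) - set(baseline)
    if unknown:
        raise KeyError(f"unknown inputs: {sorted(unknown)}")
    scenario = {**baseline, **changes}  # copy of the baseline with the changes applied
    before = approval_probability(baseline)
    after = approval_probability(scenario)
    return {"changed": changes, "before": before, "after": after, "delta": after - before}


# Hypothetical applicant exploring "what if I paid down some debt?"
applicant = {"credit_score_std": -0.5, "dti_ratio_std": 0.8, "down_payment_pct_std": -0.3}
summary = scenario_impact(applicant, dti_ratio_std=0.2)
```

Re-scoring the whole scenario, rather than nudging the old probability, keeps the comparison exact: in a logistic model a fixed change to one input shifts the log-odds by a fixed amount, but how much that moves the probability depends on where the applicant started.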

Prism in its sprint form is a proof of concept — and a strong one. The model works, the interface is functional, and the core UX argument holds: when applicants can see inside the process, it stops being a black box and starts being something they can prepare for.

The design decisions that matter most for business impact are the ones that reduce application drop-off caused by anxiety. It isn't that users like Sam can't afford a home; they don't apply because they're afraid of feeling embarrassed. A tool that lets her rehearse privately, before she ever talks to a broker, fundamentally changes that dynamic.